
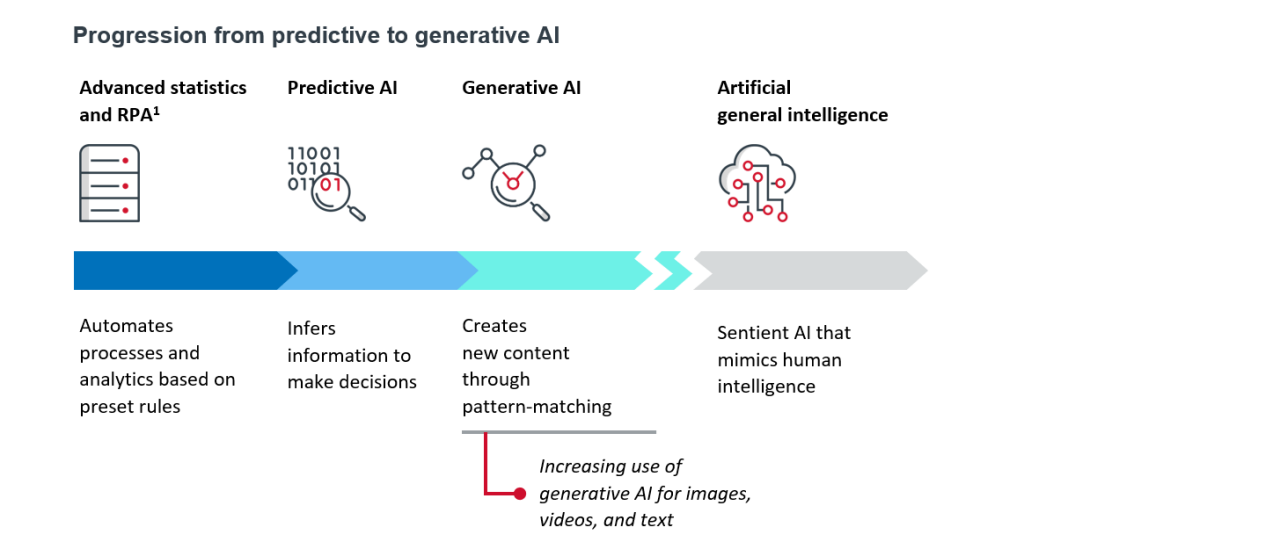

AI is a range of specialized tools that can perform specific tasks using algorithms, pattern matching, and other techniques. Although performing these tasks can make AI seem like a “human” intelligence, it must be trained on data, which can range from databases to interactions with users. The more data AI has access to, the more accurate its predictions become.

AI is not sentient and can’t perform tasks autonomously — and because each AI model has limited utility outside the task for which it was designed, it can’t be used to replace clinicians or staff. In fact, there is a big leap between the generative AI that is possible today and the human-like intelligence that we often imagine AI to be.

Although AI has the potential to become an existential threat for organizations, it could also solve many of healthcare’s most difficult problems — if health systems are willing to tackle tough challenges and ensure responsible use.

- Healthcare organizations are already using AI to solve challenges related to patient care, clinician burnout, and recruitment and retention. For example, Nuance’s Dragon Ambient eXperience (DAX) copilot records interactions between patients and providers, then converts them into clinical documentation — which then goes through quality review and physician sign-off. After implementation, 80% of patients felt their physician was more focused, and there was a 40% increase in first-time approval of prior authorizations.

- AI solutions can also help analyze and interpret healthcare data at scale. GRAIL’s AI tool, Galleri, has allowed GRAIL to use liquid biopsies to detect more than 50 different types of cancer in asymptomatic patients. In the past, liquid biopsies have been difficult to scale, but if the Galleri study is successful, more than 1 million people in the UK could have access in 2024-2025.

- If used strategically, AI has the potential to mitigate healthcare bias. When trained on diverse data sets, an algorithmic prediction (ALG-P) model allowed researchers to identify a disparity in care: Using ALG-P, researchers were able to correlate a specific radiographic appearance of the knee with severe pain in Black patients. Because that correlation had gone unrecognized, Black patients have been less likely to be offered surgery for severe knee pain. ALG-P was thus able to capture a more accurate picture of patient pain and potentially open avenues to better treatment for marginalized populations.

That said, AI adoption is not without its challenges. While some challenges occur with most technological advances, certain challenges — and their solutions — are specific to AI:

Data bias. AI models are in danger of encoding bias from biased training data, resulting in the under- or misrepresentation of marginalized groups, delivery of incorrect medical information to clinicians and patients, and repeated patterns of discrimination from algorithms learning and reinforcing inequities.

Take care to use (or use companies that use) diverse data sets and consider how your organization will monitor and manage data outputs.
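One way to make "monitor and manage data outputs" concrete is to compare how often a model is wrong for each patient group. The sketch below is illustrative only: the record layout, the group labels, the function names, and the 10-percentage-point threshold are all assumptions made for this example, not a method described in this report or a clinical standard.

```python
from collections import defaultdict

def error_rate_by_group(records):
    """Return {group: share of records where the prediction differs from the actual outcome}.

    Each record is a hypothetical (group, predicted, actual) tuple.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

def flag_disparities(rates, max_gap=0.1):
    """Return groups whose error rate exceeds the lowest group's rate by more than max_gap.

    max_gap is an assumed review threshold, not an established benchmark.
    """
    best = min(rates.values())
    return sorted(group for group, rate in rates.items() if rate - best > max_gap)

# Made-up sample data for illustration only.
sample = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 1),
    ("group_b", 1, 0), ("group_b", 0, 1), ("group_b", 1, 1), ("group_b", 0, 0),
]

rates = error_rate_by_group(sample)
flagged = flag_disparities(rates)
print(rates)
print(flagged)
```

A check like this only surfaces a gap. Deciding whether the gap reflects biased training data, and what to do about it, still requires human review.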

Data privacy. Collecting data is necessary for good, equitable interactions, but it also risks exposing protected health information and intellectual property.

Consider using internal (or closed) versions of generative AI, remove protected health information from patient data, create guidelines on how and when to collect data, and educate the workforce about the risks of sharing sensitive data.

Transparency and explainability. AI has a black-box problem, meaning that users can’t see how and why deep learning algorithms work, leading to improper use or distrust in AI outputs.

Provide some transparency around data collection and management to help users understand what data the AI will use to generate outputs.

Data accuracy and reliability. AI inaccuracies can have a larger impact than human error, because they are more likely to happen at scale. Data bias, incomplete or inaccurate training data, overfitting, and hallucinations can lead to a loss of trust among providers and patients, patient mistreatment, and worsened healthcare inequities.

Establish systems of accountability in case of AI-caused harm.

Liability. When AI creates medical errors, it can create liability for organizations.

Track new U.S. legal rulings and regulations, put systems in place to establish accountability in case of AI-caused harm, and instill guidelines on which AI technologies are acceptable at your organization.

AI isn’t a silver bullet: Incorporating it effectively into an overall strategy takes time and consideration. Grounding AI adoption and governance in the strategies you already have in other areas of your organization will keep AI-specific policies laser-focused on your purpose and goals.

Rather than starting the conversation with which AI technology you need, focus first on the goals you want to achieve. For example, if the goal is to improve patient experience and its root cause is patients feeling unheard by clinicians, you might consider implementing ambient listening and automated note summaries so that clinicians can start prioritizing patient interactions over notetaking.

The potential tensions and trade-offs of AI implementation should also be a factor in decision-making. To illustrate, automated notetaking will need systems in place so that clinical notes can be verified by clinicians, which will require increased work during the planning and implementation process. It may also be difficult to measure the impact of AI on improving patient experience, because capturing whether patients feel heard in unstructured conversations with clinicians could be considered subjective.

Once you’ve decided whether and how specific AI technologies fit into existing strategy, create a process of evaluation, governance, and change management by seeking guidance from inside and outside of your organization, relying on internal expertise, actively approaching responsible and ethical AI use, and systematically communicating with your organization about AI implementation.

Steps to incorporate AI into your existing strategy

- Review and adjust your 5-year strategic plan and consider which departments are most amenable to change.

- Identify your business challenges and consider how AI technologies can address them.

- Include the potential uses and benefits of AI in strategic planning scenarios and outlooks.

- Ensure leaders are on board to help guide your decision-making and approach to AI.

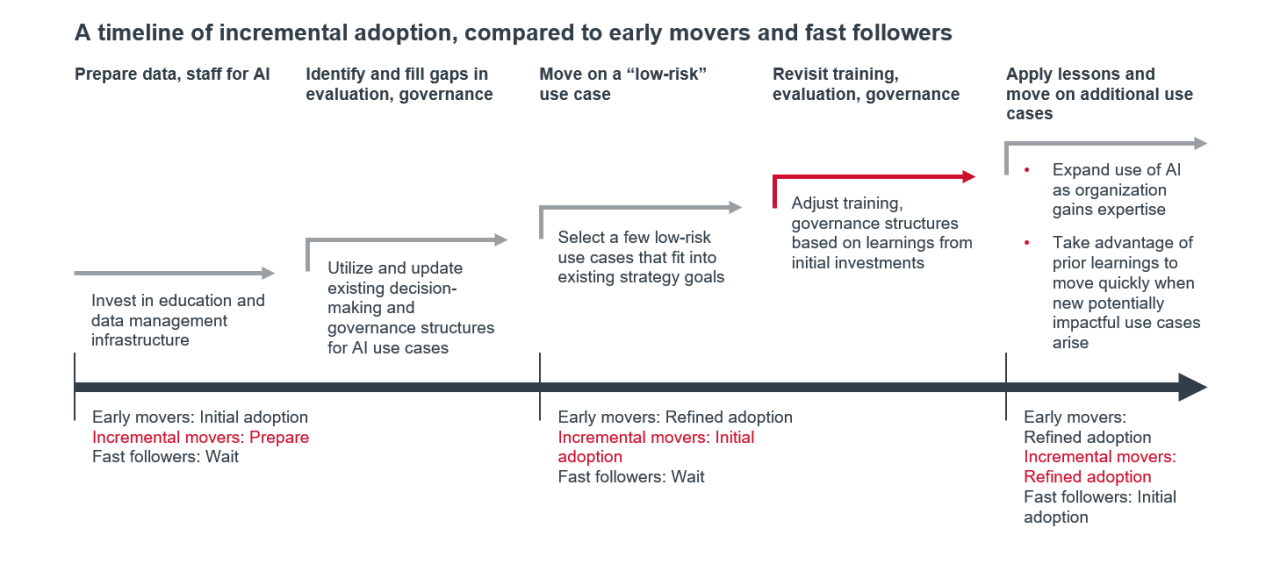

Early adoption of AI can come with benefits such as efficiency, cost-effectiveness, more time to build culture and literacy around AI, attractiveness to physicians who want less administrative work, and a reputation/marketing advantage. However, it can also come with a lot of uncertainty and risk. Organizations that move too quickly can lose money on investments, struggle to develop new processes for tech pilots, and fail to learn quickly and pivot to new strategies when necessary. On the other hand, organizations that wait to plan for AI implementation run the risk of missing out on its benefits and being blindsided when unexpected challenges arise.

While organizations can succeed as early adopters or fast followers, both paths come with significant challenges. Incremental innovation is likely where most organizations should strive to be when it comes to AI. Taking an incremental approach to AI technologies can help organizations meet those challenges systematically, leaving them prepared to realize the long-term benefits of AI when they are ready while mitigating the risks of investment. The chart below shows different AI investment points and where early movers, fast followers, and incremental adopters fall on the timeline to AI adoption:

WellSpan Health’s vision is to reimagine healthcare through the delivery of comprehensive, equitable health and wellness solutions throughout our continuum of care. As an integrated delivery system focused on leading in value-based care, we encompass more than 2,000 employed providers, 220 locations, eight award-winning hospitals, home care and a behavioral health organization serving South Central Pennsylvania and northern Maryland. With a team 20,000 strong, WellSpan experts provide a range of services, from wellness and employer services solutions to advanced care for complex medical and behavioral conditions. Our clinically integrated network of 2,600 aligned physicians and advanced practice providers is dedicated to providing the highest quality and safety, inspiring our patients and communities to be their healthiest.

This report is sponsored by WellSpan Health, an Advisory Board member organization. Representatives of WellSpan Health helped select the topics and issues addressed. Advisory Board experts wrote the report, maintained final editorial approval, and conducted the underlying research independently and objectively. Advisory Board does not endorse any company, organization, product or brand mentioned herein.

